Vibecoding in practice: how AI and humans built a real iPhone app together

How HockeyUmpire became the best app for hockey umpires

There’s a moment in almost every product journey where you think:

this is too complex—I need a developer.

For a long time, that assumption was simply true. Complexity meant specialization, and specialization meant bringing in someone who could translate your ideas into code.

But over the past months, I started to notice something shifting—not in the complexity itself, but in how that complexity could be handled.

I built a fully functional iPhone + Apple Watch app—HockeyUmpire, a match control app for field hockey umpires—without being a traditional developer. Not by simplifying the product or lowering the bar, but by approaching the entire process differently. Instead of trying to control every detail myself, I focused on structuring the system in such a way that AI could reliably execute within it.

That shift is what I now refer to as vibecoding.

Not as a buzzword, but as a working method that changes how you think about building products.

What vibecoding actually means

Vibecoding is often misunderstood as “just prompting ChatGPT,” but in practice it feels much closer to orchestrating a system than issuing instructions.

You’re not writing every line of code yourself. Instead, you define intent, structure, and constraints, and you let different AI systems operate within that clearly defined space. The quality of the output is therefore not dependent on a single prompt, but on how well the system around it is designed.

In my case, the stack behind HockeyUmpire evolved into a layered setup:

- ChatGPT for strategy, architecture, and reasoning

- Codex for precise implementation and refactoring

- Xcode for compilation, debugging, and validation

- A set of dedicated agents for translation, QA, security, and website development

What became clear quite quickly is that the difference between random AI output and something you can actually build on is subtle but fundamental. AI will always generate output, but vibecoding is about creating a system where that output becomes predictable, testable, and ultimately reliable enough to ship.

The product: HockeyUmpire

Before going deeper into the process, it’s important to anchor this in something real.

HockeyUmpire is not a prototype or a side project that lives in a simulator. It is a production-ready app that I use myself on the field as an umpire, and that is designed to support real match situations where reliability matters more than anything else.

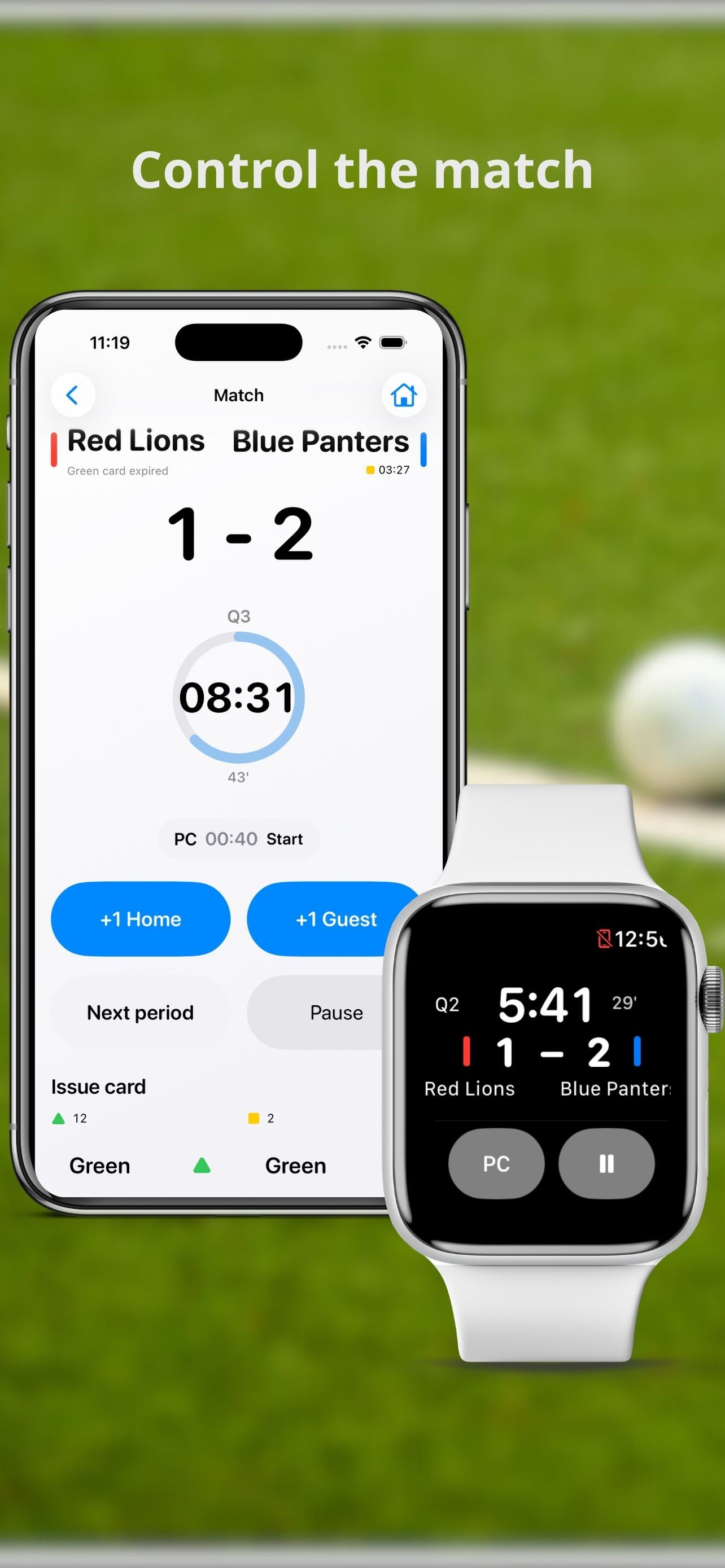

The app combines a watch-first approach with a broader ecosystem:

- Apple Watch-first match control during the game

- iPhone-based setup, history, and analytics

- Full match management including score, cards, penalty corners, and shoot-outs

- GPS tracking and Apple Health integration for physical and positional insights

- Shareable match reports and umpire performance data

- Widgets and complications for quick access

- An offline-first architecture with local persistence, because matches don’t wait for connectivity

If I had to summarize it in one sentence, I would say:

HockeyUmpire is a match control app for field hockey umpires on Apple Watch and iPhone, designed to give you clarity and control before, during, and after the match.

But the more important ambition sits one layer deeper. The goal is not to be “a useful app,” but to become the standard app umpires instinctively open before every match. That changes how you think about product decisions, because it forces you to prioritize reliability, simplicity, and trust over feature volume.

Core functionality is free, which lowers the barrier to entry, while Pro features extend the experience for umpires who want deeper insight into their performance. That balance, accessible at the start and powerful over time, turned out to be essential.

Step 1: roadmap as the control layer

One of the first things I learned is that most AI-driven development doesn’t fail in the code—it fails in the absence of structure before any code is written.

It’s tempting to start with “let’s build this feature,” but that immediately introduces ambiguity, and ambiguity is where AI starts to drift.

Instead, I treated the roadmap as a control layer for the entire system. Each phase had a clear purpose and built on the previous one:

- Phase 1 focused on core match control

- Phase 2 introduced reliability and state persistence

- Phase 3 expanded into more complex match flows

- Phase 4 added analytics and sharing

- Phase 5 introduced Pro features and monetization

For every feature, I forced myself to think through the details that are easy to skip:

- What is the exact user flow?

- What happens in edge cases?

- What happens when something fails?

- How does this behave across iPhone and Watch?

What I realized is that AI doesn’t struggle with syntax or logic in isolation—it struggles with ambiguity in context. The more explicitly you define that context, the more consistent and useful the output becomes.

Step 2: agents instead of prompts

Another shift that made a significant difference was moving away from the idea of “one conversation” with ChatGPT, and instead thinking in terms of dedicated agents with clear responsibilities.

Each agent was focused on a specific part of the system.

The architecture agent helped define how the app should be structured, ensuring a clear separation between UI and logic, and preventing the kind of fragile codebase that becomes unmanageable after a few iterations.

The coding agent, primarily using Codex, handled the actual implementation. This is where precision mattered most. Instead of vague instructions like “fix the timer,” I learned to work with very targeted prompts such as: “update TimerManager.swift to recalculate remaining time using Date differencing and remove background loops.” That level of specificity turns AI from a helper into something that behaves much more like a senior engineer working within constraints.

Reliability required its own focus. I explicitly introduced a safety-oriented agent that looked at failure scenarios, startup behavior, and data integrity. One principle guided all decisions here: the app must always start, even if local data is corrupted. That led to defensive loading strategies, fallback modes, and safe reset paths. AI doesn’t naturally prioritize these things—you have to enforce them.
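
To make the “app must always start” principle concrete, here is a minimal sketch of defensive loading. It is not the app's actual code: MatchState, LoadOutcome, and loadMatchState are hypothetical names, and storing the state as a single JSON file is an assumption made for illustration. The idea is simply that an unreadable file is moved aside and the app continues with a clean state instead of crashing at launch.

```swift
import Foundation

/// Hypothetical persisted state; the real app's model is not shown in the article.
struct MatchState: Codable, Equatable {
    var homeScore = 0
    var awayScore = 0
}

/// What happened during loading, so the UI can decide whether to inform the user.
enum LoadOutcome: Equatable {
    case restored                 // file existed and decoded cleanly
    case freshStart               // no file yet, e.g. first launch
    case recoveredFromCorruption  // file was unreadable and has been moved aside
}

/// Never throws: every failure path ends in a usable MatchState.
func loadMatchState(from url: URL,
                    fileManager: FileManager = .default) -> (MatchState, LoadOutcome) {
    guard fileManager.fileExists(atPath: url.path) else {
        return (MatchState(), .freshStart)
    }
    do {
        let data = try Data(contentsOf: url)
        let state = try JSONDecoder().decode(MatchState.self, from: data)
        return (state, .restored)
    } catch {
        // Keep the bad file for later inspection, but get it out of the way
        // so the next launch does not hit the same failure again.
        let quarantine = url.appendingPathExtension("corrupt")
        try? fileManager.removeItem(at: quarantine)
        try? fileManager.moveItem(at: url, to: quarantine)
        return (MatchState(), .recoveredFromCorruption)
    }
}
```

Returning an outcome alongside the state is a design choice in this sketch: it keeps the recovery silent by default while still allowing a safe reset message to be shown when data was lost.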

Timers and system behavior turned out to be more complex than expected. Instead of relying on continuous background execution—which iOS aggressively limits—we shifted to a model based on stored timestamps, recalculation on resume, and local notifications for precision. That combination resulted in accurate timing with minimal resource usage, even when the app is backgrounded or the device is locked.
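
The timestamp model can be sketched roughly as follows. This is an illustration under assumptions, not the contents of TimerManager.swift: the MatchClock type and its members are hypothetical names. The clock never ticks on its own; it stores when it was started and how much time had already been played, and derives the remaining time from the current date whenever someone asks. Scheduling the local notification for the end of the period is left out here.

```swift
import Foundation

/// Hypothetical sketch: a match clock that stores timestamps instead of running a loop.
struct MatchClock: Codable {
    /// Total length of the period in seconds.
    let periodLength: TimeInterval
    /// Seconds already played before the most recent start (accumulates across pauses).
    private(set) var elapsedBeforeStart: TimeInterval = 0
    /// Wall-clock time of the most recent start; nil while the clock is paused.
    private(set) var startedAt: Date?

    init(periodLength: TimeInterval) {
        self.periodLength = periodLength
    }

    /// Starts or resumes the clock. Does nothing if it is already running.
    mutating func start(at now: Date = Date()) {
        guard startedAt == nil else { return }
        startedAt = now
    }

    /// Pauses the clock and banks the time played since the last start.
    mutating func pause(at now: Date = Date()) {
        guard let startedAt = startedAt else { return }
        elapsedBeforeStart += now.timeIntervalSince(startedAt)
        self.startedAt = nil
    }

    /// Remaining time, recalculated from stored timestamps. Safe to call after
    /// the app was suspended, because nothing needed to run in between.
    func remaining(at now: Date = Date()) -> TimeInterval {
        var elapsed = elapsedBeforeStart
        if let startedAt = startedAt {
            elapsed += now.timeIntervalSince(startedAt)
        }
        return max(0, periodLength - elapsed)
    }
}
```

Because the struct is Codable, it can be written to disk as-is. When the app returns to the foreground, or is relaunched after being terminated, decoding the stored value and calling remaining(at:) gives the correct time without any background execution.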

The Apple Watch introduced another layer of constraints. Performance limitations, throttling, and runtime restrictions meant that the entire system had to be optimized for efficiency. Event-driven updates, minimal processing, and persistent state became essential. This is also where the “watch-first” philosophy became real: the Watch is not an extension, it is the primary interface during the match.

Localization was another interesting case. Supporting multiple languages is relatively easy in theory, but maintaining consistency and correct terminology across languages is not. I ended up creating a dedicated translation agent to handle this, ensuring that hockey-specific terminology remained accurate and consistent across all supported languages.

Even the website followed the same principle. Instead of treating it as a separate project, I approached it as another agent-driven system, focused on clarity rather than cleverness. Clear definitions of what the app is, who it is for, and when you use it turned out to be far more effective for both users and search engines than any form of keyword optimization.

Step 3: Codex as a force multiplier

Working with Codex became significantly more effective once I stopped treating it as a generic code generator and started treating it as a constrained expert.

The biggest shift was moving toward file-scoped instructions, where each request was tied to a specific file and a specific change. That alone reduced ambiguity dramatically.

At the same time, I accepted that the process is inherently iterative. You generate code, compile it, inspect logs, and refine your instructions. That loop is not inefficiency—it is the system learning through feedback.

To make that loop workable, structured logging became essential. By introducing consistent log prefixes such as [WATCH][VM], [PERSIST][BOOT], and [WC][SYNC], I was able to trace behavior across different parts of the system and understand how changes propagated. Without that visibility, AI-generated systems quickly become opaque and difficult to debug.
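
A prefix convention like this is easiest to keep consistent when the prefixes live in one place. The following is a minimal sketch under assumptions: the three prefixes come from the text above, but LogScope, its case names, formatLog, log, and lines(in:for:) are hypothetical, and reading [WC] as watch connectivity is a guess.

```swift
import Foundation

/// Hypothetical log scopes. The prefix strings are the ones mentioned above;
/// the case names are illustrative guesses at what each prefix covers.
enum LogScope: String {
    case watchViewModel = "[WATCH][VM]"
    case persistenceBoot = "[PERSIST][BOOT]"
    case connectivitySync = "[WC][SYNC]"
}

/// Builds a log line with a consistent prefix.
func formatLog(_ scope: LogScope, _ message: String) -> String {
    "\(scope.rawValue) \(message)"
}

/// Writes a prefixed line to standard output.
func log(_ scope: LogScope, _ message: String) {
    print(formatLog(scope, message))
}

/// Filters captured log lines down to a single scope, which is the same
/// filtering you would do by hand when pasting logs back into a prompt.
func lines(in logLines: [String], for scope: LogScope) -> [String] {
    logLines.filter { $0.hasPrefix(scope.rawValue) }
}
```

With an enum, a typo in a prefix becomes a compile error rather than a log line that silently escapes your filter.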

Step 4: human control is the real system

If there is one misconception about AI-driven development, it is the idea that the human becomes less important.

In reality, the opposite happens.

AI can generate solutions, but it cannot decide whether those solutions are appropriate in context. It doesn’t understand trade-offs in the same way, and it doesn’t feel when something is “off” from a user perspective.

That responsibility remains entirely human.

In practice, this meant constantly evaluating output not just on whether it worked, but on whether it made sense for the user. I often found myself rewriting prompts along the lines of “the app currently behaves like this, but the expected behavior is…”, followed by a detailed explanation of the use case.

That process—explaining intent, rejecting technically correct but contextually wrong solutions, and maintaining consistency across the product—is where most of the real work happens.

Step 5: why this works—and when it fails

Vibecoding works because it combines two complementary strengths.

AI is extremely good at structured execution when the boundaries are clear. Humans are extremely good at contextual judgment, prioritization, and understanding what actually matters in a real-world situation.

The model breaks down when those boundaries disappear. If constraints are unclear, if output is accepted without critical evaluation, or if the overall architecture is not actively maintained, the system quickly starts to drift.

Direction is not optional—it is the foundation.

Can non-developers really build apps this way?

The short answer is yes, but that answer needs nuance.

You don’t need to be a traditional developer, but you do need to think like a system designer. That includes structuring flows, reasoning about edge cases, and maintaining consistency across the entire product.

In that sense, the barrier has not disappeared—it has shifted. It is no longer primarily about writing code, but about understanding systems and making good decisions within them.

The bigger shift

What happened with HockeyUmpire is not a one-off experiment.

It reflects a broader shift in how products can be built.

We are moving toward a model where a single person can create what previously required a team, not by doing more work themselves, but by orchestrating systems that handle execution. AI becomes the engine, but the human remains responsible for direction.

In that context, vibecoding is not a shortcut or a trick.

It is a discipline that combines structure, intent, and continuous evaluation.

Final thought

If there is one takeaway from this process, it is this:

Don’t try to outcode AI. Learn to direct it.

Because the real leverage is no longer in writing code faster, but in building better systems with clearer intent and stronger constraints.

And sometimes, if you do that well enough, it leads to something you didn’t expect at the start:

A production-ready app, used on real hockey fields, supporting real umpires in real matches,

and gradually evolving into one of the most complete and practical apps for hockey umpires available today.

Not built despite complexity, but by learning how to work with it.